Press Release

ZF ProAI: Autonomous Driving Soon a Reality with Artificial Intelligence

- ZF exhibits test vehicle with extensive sensor set and AI-capable ZF ProAI control box at CES 2018

- Modular approach for highly and fully automated driving

- Continuous expansion and scalability of hardware and functions

Friedrichshafen/Las Vegas. At CES 2018, ZF is presenting its next steps on the road to autonomous driving. Engineers from ZF’s predevelopment team have implemented numerous driving functions in a test vehicle, enabling level 4, fully automated driving. With this, ZF is demonstrating extensive expertise as a system architect for autonomous driving, in particular in detecting and processing environmental data. The advanced engineering project also demonstrates the efficiency and practicality of ZF’s supercomputer – the ZF ProAI – presented just a year ago by ZF and NVIDIA. It acts as the central control unit within the test vehicle. With it, ZF is taking a modular approach to the development of automated driving functions. The goal is a system architecture that can be applied to any vehicle and tailored to the application, the available hardware and the desired level of automation.

Implementing developments for the respective automation levels is an industry-wide challenge: “The vast field of automated driving is the sum of many individual driving functions that a car must be able to handle without human intervention. And it has to do that reliably, in different weather, traffic and visibility conditions,” says Torsten Gollewski, head of Advanced Engineering at ZF Friedrichshafen AG.

System architect for needs-based automation

As part of the test vehicle, ZF has set up a complete, modular development environment including functional architecture with artificial intelligence. “For example, we implemented a configuration for fully automated, that is, level 4 driving functions. The configuration’s modules can be adapted to the specific application according to ZF’s ‘see-think-act’ approach – helping vehicles to have the necessary visual and thinking skills for urban traffic,” says Gollewski. “The flexible architecture also allows for other automation levels in a wide variety of vehicles. At the same time, it provides information about which minimum hardware configuration is essential for which level.”

In recent months, ZF’s engineers have “trained” the vehicle to perform different driving functions. Particular focus was placed on urban situations, such as interacting with pedestrians and groups of pedestrians at crosswalks, collision estimation, and behavior at traffic lights and roundabouts. “In contrast to a trip on a freeway or rural road, it is significantly more complex in urban scenarios to create a reliable understanding of the current traffic situation, which provides the basis for appropriate actions of a computer-controlled vehicle,” says Gollewski.

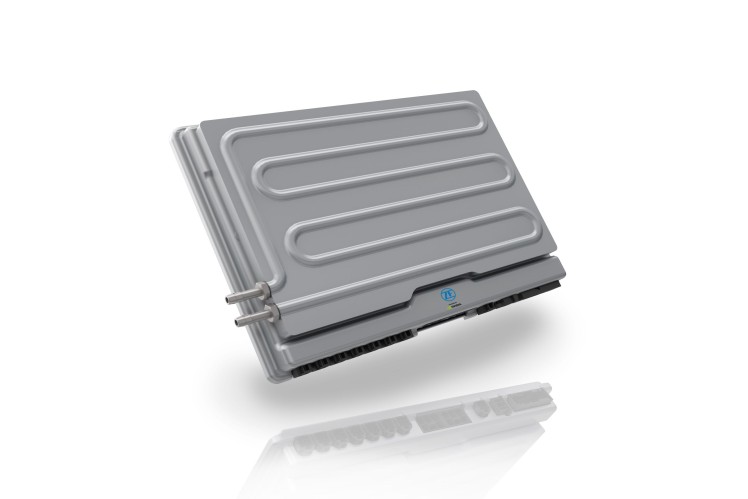

Thinking on demand with ZF ProAI

With its open architecture, ZF ProAI is scalable – the hardware components, connected sensor sets, evaluation software and functional modules can be adapted to the desired purpose and degree of automation. For example, the computing performance of ZF ProAI can be configured for almost any specific requirement profile. In the application shown at CES, the control unit uses the Xavier chip with an 8-core CPU architecture and 7 billion transistors. It manages up to 30 trillion operations per second (TOPS) with a power consumption of only 30 watts. The chip complies with the strictest standards for automotive applications – just like ZF ProAI itself – creating the conditions for artificial intelligence and deep learning.
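To put the quoted figures in relation to each other, the following minimal Python sketch computes the resulting efficiency from the numbers stated above (up to 30 TOPS at 30 watts). The function name `tops_per_watt` is purely illustrative and not part of any ZF or NVIDIA software; the result is a peak ratio derived from the quoted figures, not a measured value.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Return computing efficiency in trillion operations per second per watt."""
    if watts <= 0:
        raise ValueError("power consumption must be positive")
    return tops / watts


# Figures quoted in the text for the application shown at CES.
xavier_peak_tops = 30.0   # up to 30 trillion operations per second
xavier_power_watts = 30.0  # power consumption of 30 watts

efficiency = tops_per_watt(xavier_peak_tops, xavier_power_watts)
print(f"Peak efficiency: {efficiency:.1f} TOPS per watt")
```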

Data interaction

The comprehensive sensor set from ZF and its partner network plays an important role in keeping an eye on the environment. Cameras, LIDAR and radar sensors are installed in the current vehicle. They help to enable a complete 360-degree understanding of the test vehicle’s surroundings, updated every 40 milliseconds. This enormous flood of data – one camera alone generates one gigabit per second – is analyzed in real time by the ProAI’s computing unit. “Artificial intelligence and deep-learning algorithms are used primarily to accelerate the analysis and to make the recognition more precise. It’s about recognizing recurring patterns in traffic situations from the flood of data, such as a pedestrian trying to cross the road,” says Gollewski. The possible vehicle reactions that are then retrieved, which determine the calculation of longitudinal acceleration or deceleration and the further direction of travel, are firmly stored in the software.
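The two figures above (an update every 40 milliseconds and one gigabit per second per camera) imply how much data a single camera contributes to each update. The short Python sketch below works this out. It assumes a constant camera data rate, decimal units (1 gigabit = 10^9 bits, 1 megabyte = 10^6 bytes) and illustrative variable names; none of this is taken from ZF’s software.

```python
# Figures quoted in the text.
UPDATE_INTERVAL_MS = 40                   # surroundings updated every 40 ms
CAMERA_RATE_BITS_PER_S = 1_000_000_000    # one camera: one gigabit per second

# Assumption: the camera delivers data at a constant rate.
updates_per_second = 1000 / UPDATE_INTERVAL_MS
bits_per_update = CAMERA_RATE_BITS_PER_S * UPDATE_INTERVAL_MS // 1000
megabytes_per_update = bits_per_update / 8 / 1_000_000

print(f"Updates per second: {updates_per_second:.0f}")
print(f"Data per camera per update: {bits_per_update / 1_000_000:.0f} megabits "
      f"({megabytes_per_update:.0f} megabytes)")
```

Under these assumptions, the environment model is refreshed 25 times per second, and each camera delivers about 40 megabits (5 megabytes) between two consecutive updates.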

Exhibition vehicle in Las Vegas “dreams” a drive through Friedrichshafen

This can also be experienced at the trade fair stand at CES. ZF provides the static vehicle in Las Vegas with sensor data obtained during a live test drive between the ZF company headquarters and the research and development center in Friedrichshafen, Germany. The vehicle – or rather, the ZF ProAI – interprets this data in real time as if it were following the route live. Its actions, such as steering, braking and acceleration, which are visible at the exhibition stand, correspond exactly to the route 9,200 kilometers away – as if the car were imagining itself driving on the other continent.